Securing our codebase with autonomous agents

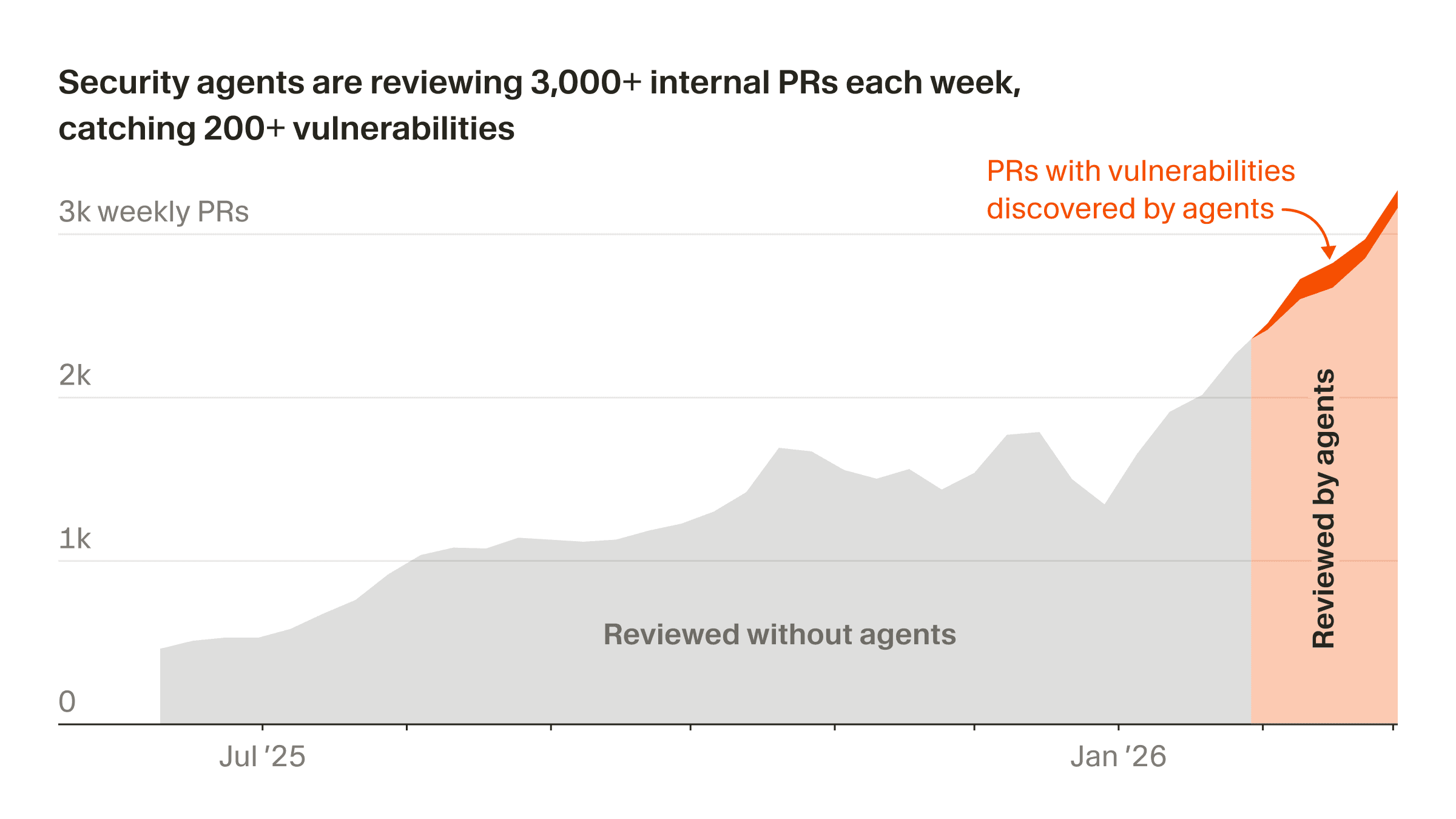

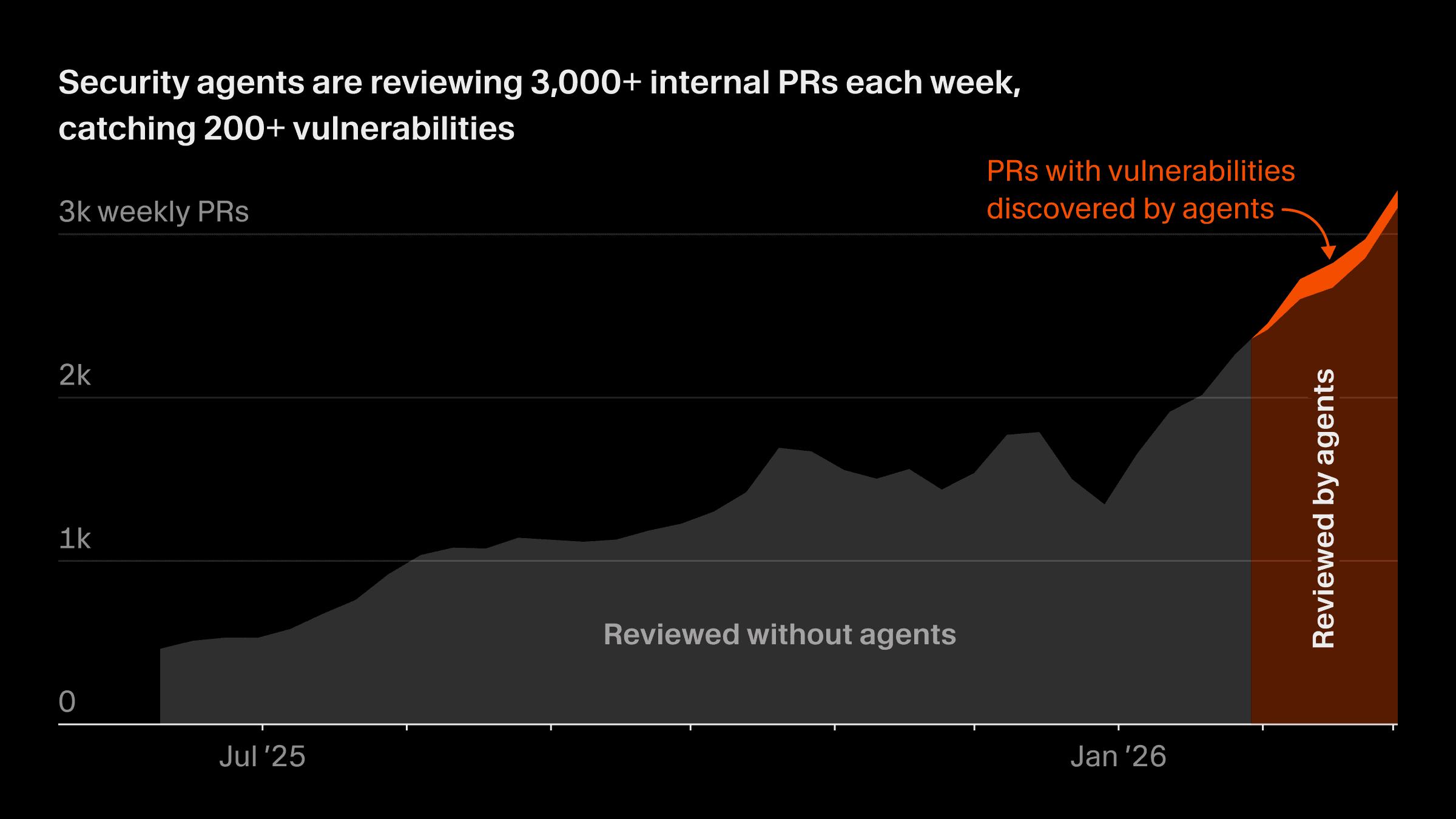

Over the last nine months, our PR velocity has increased 5x. Security tooling based on static analysis or rigid code ownership remains helpful, but is not enough at this scale. We've adapted by using Cursor Automations, which has allowed us to quickly build a fleet of security agents that continuously identify and repair vulnerabilities in our codebase.

Today, we're releasing four new automation templates with the exact blueprints of the security agents we've found to be most helpful. Other security teams can customize these templates to build agents that automatically resolve a wide range of security issues.

The automations architecture

For agents to be useful for security, they need two features, both of which Cursor Automations provides.

The first is out-of-the-box integrations for receiving webhooks, responding to GitHub pull requests, and monitoring codebase changes. This allows agents that operate in the background to know when to step forward and take action.

The second is a rich agent harness and environment. Automations run on cloud agents, so they inherit all the tools, skills, and observability that cloud agents have.

To make automations more powerful for security-specific use cases, we built a security MCP tool and deployed it as a serverless Lambda function, so it starts on demand and does not run otherwise.

The MCP, whose reference code is available here, serves three purposes:

- Persistent data. The agent uses the MCP to store data, so we can track and measure security impact over time. We use that data to continually refine when and how we trigger automations.

- Deduplication. We run multiple review agents on every change, and because their findings are generated by an LLM, different agents can end up using different words to describe the same underlying issue. To avoid duplicate work, the MCP allows the agent to deploy a classifier powered by Gemini 2.5 Flash that determines when two differently worded findings describe the same problem.

-

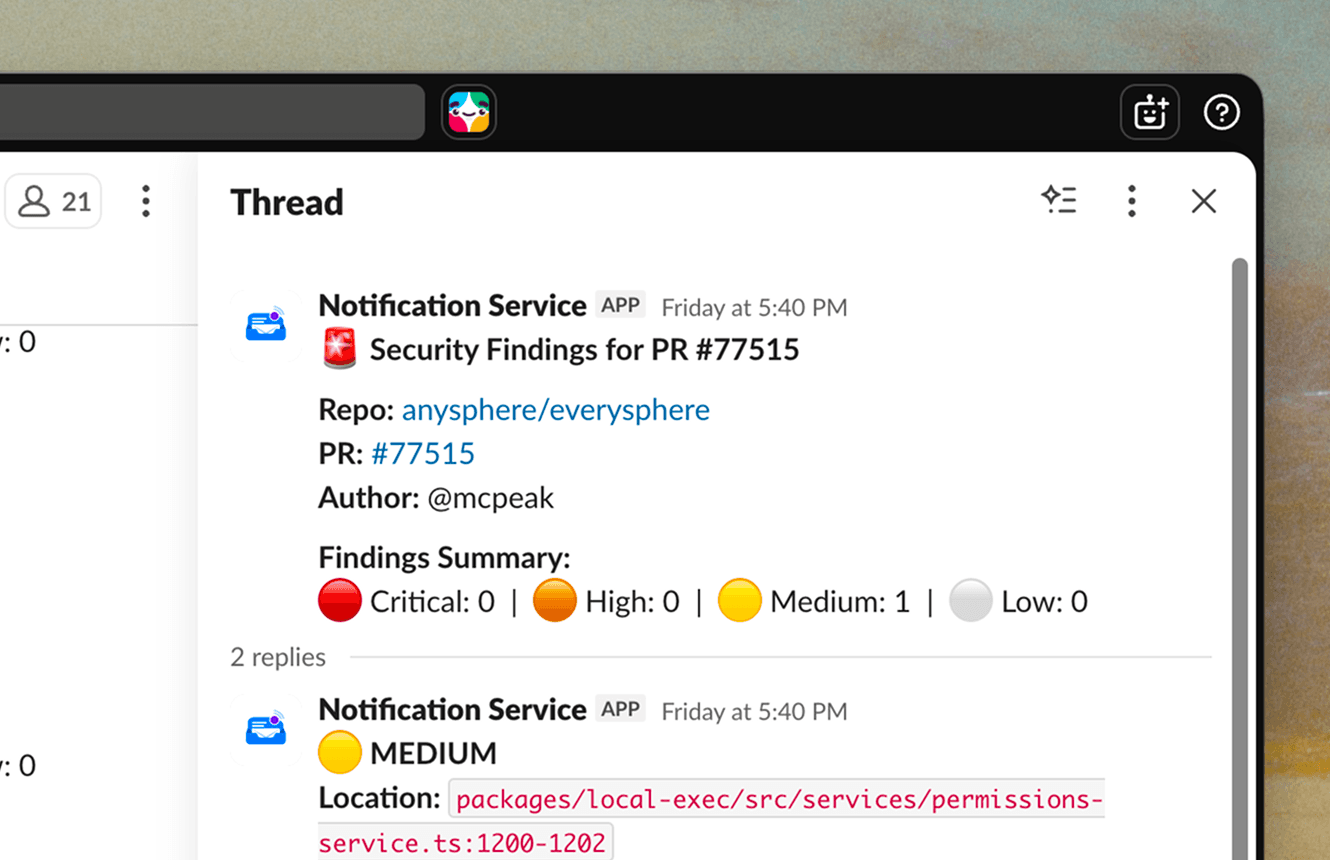

Consistent output. Agents report every vulnerability they find through the MCP, which sends consistently formatted Slack messages and handles further actions like dismissing or snoozing a finding.
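The deduplication and consistent-output roles can be sketched in a few lines. This is a minimal illustration and not the reference code: `Finding`, `deduplicate`, and `format_report` are hypothetical names, and `toy_same_issue` is a word-overlap stand-in for the LLM classifier so the example runs without network access.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class Finding:
    agent: str      # which review agent reported it
    file: str       # path the finding points at
    summary: str    # free-text description written by the agent


# Hypothetical classifier signature: returns True when two findings describe
# the same underlying issue. In a real deployment this would be an LLM call.
SameIssue = Callable[[Finding, Finding], bool]


def toy_same_issue(a: Finding, b: Finding) -> bool:
    """Stand-in for the LLM classifier: same file and at least two shared words."""
    if a.file != b.file:
        return False
    shared = set(a.summary.lower().split()) & set(b.summary.lower().split())
    return len(shared) >= 2


def deduplicate(findings: List[Finding], same_issue: SameIssue) -> List[List[Finding]]:
    """Group findings so that each group represents one underlying issue."""
    groups: List[List[Finding]] = []
    for finding in findings:
        for group in groups:
            # Compare against the group's first member only, to keep the
            # number of classifier calls low.
            if same_issue(group[0], finding):
                group.append(finding)
                break
        else:
            groups.append([finding])
    return groups


def format_report(group: List[Finding]) -> str:
    """Render one deduplicated issue in a fixed, predictable layout."""
    first = group[0]
    agents = ", ".join(sorted({f.agent for f in group}))
    return f"[security] {first.file}: {first.summary} (reported by: {agents})"
```

Passing the classifier in as a callable keeps the grouping logic testable on its own, and lets the model behind it change without touching the rest of the pipeline.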

With this foundation in place, the four security automations detailed below layer on their own workflows and trigger logic. We use Terraform to ensure that all changes to security tooling go through a standard review and deployment process.

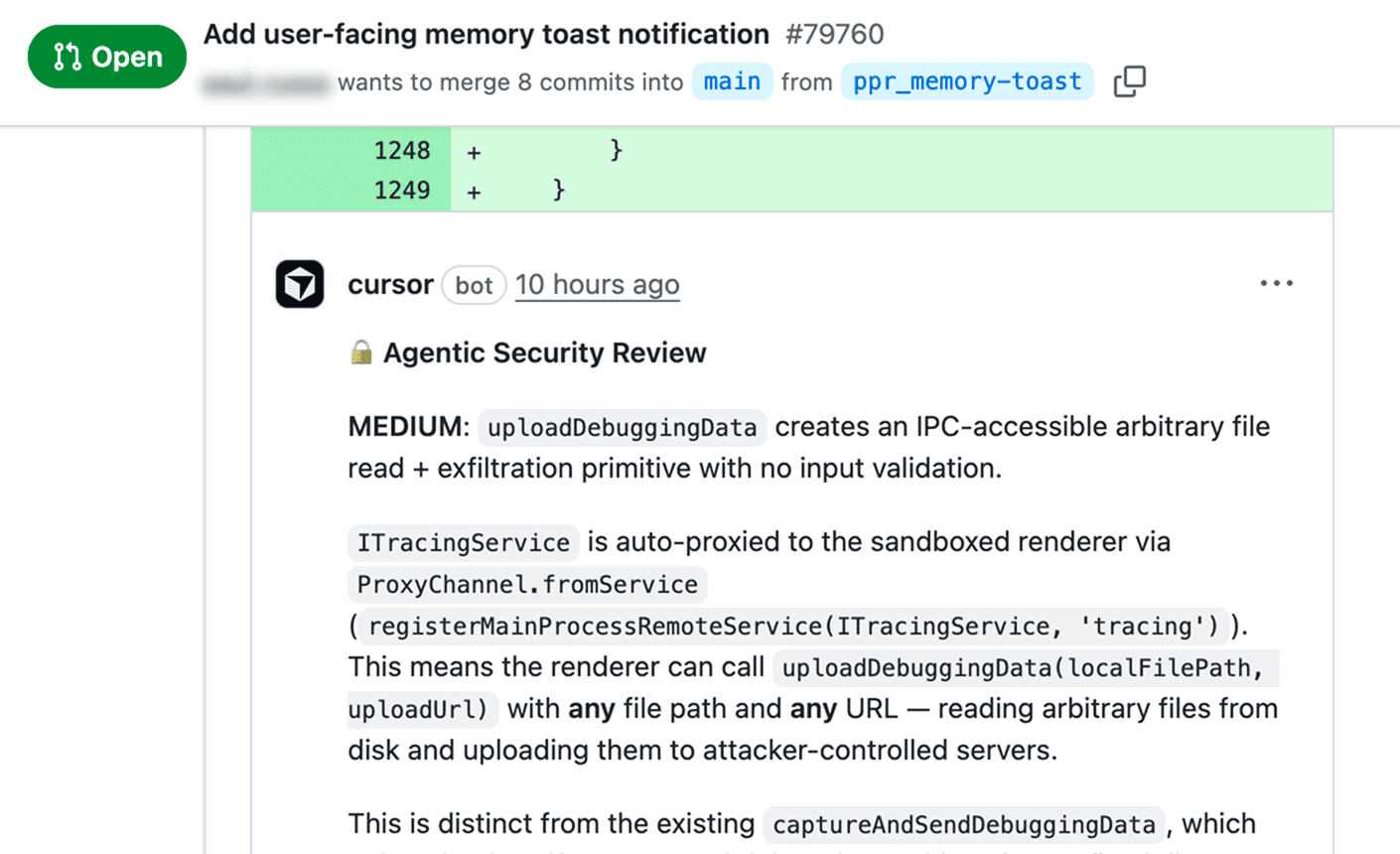

Agentic Security Review

Internally, we were already using Bugbot to review PRs for code quality and general issues, including some security findings. But a general-purpose review tool isn't ideal for security because it can't be prompt-tuned to our specific threat model, and because we needed the ability to block CI on security findings specifically, without blocking on every general code quality issue.

Given that, we built a dedicated automation we call Agentic Security Review. Initially we had it forward its findings to a private Slack channel monitored by our security team.

Once we were confident it was identifying genuine issues, we turned on PR commenting, then implemented a blocking gate check. In the last two months, Agentic Security Review has run on thousands of PRs and prevented hundreds of issues from reaching production.
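The staged rollout described above (Slack only, then PR comments, then a blocking check) can be modeled as a small decision function. This is a sketch under assumptions: the `Stage` and `Outcome` names are hypothetical, and it assumes Slack notifications continue in the later stages, which the text does not state explicitly.

```python
from enum import Enum
from typing import List, NamedTuple


class Stage(Enum):
    SLACK_ONLY = 1   # findings go to a private channel for the security team
    PR_COMMENT = 2   # findings are also posted on the pull request
    BLOCKING = 3     # findings additionally fail the CI check


class Outcome(NamedTuple):
    notify_slack: bool
    comment_on_pr: bool
    ci_passes: bool


def evaluate(findings: List[str], stage: Stage) -> Outcome:
    """Decide what happens to a PR's security findings at a given rollout stage."""
    has_findings = len(findings) > 0
    return Outcome(
        # Assumption: Slack reporting stays on at every stage.
        notify_slack=has_findings,
        comment_on_pr=has_findings and stage.value >= Stage.PR_COMMENT.value,
        # Only the final stage is allowed to fail CI.
        ci_passes=not (has_findings and stage is Stage.BLOCKING),
    )
```

Keeping the stage as a single setting means a team can move forward, or roll back, without changing the review logic itself.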

Vuln Hunter

After the success of Agentic Security Review on new code, we pointed agents at the existing codebase. Vuln Hunter is an automation that divides the code into logical segments and searches each one for vulnerabilities. Our team triages findings and typically fixes them, often using @Cursor from Slack to generate PRs.
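How the code is divided into logical segments is not specified here. One simple possibility, shown purely as an assumption, is to group files by their leading directory components so that each segment can be handed to a separate agent run. The function name and the `depth` parameter are hypothetical.

```python
from collections import defaultdict
from pathlib import PurePosixPath
from typing import Dict, Iterable, List


def segment_by_directory(paths: Iterable[str], depth: int = 2) -> Dict[str, List[str]]:
    """Group file paths by their first `depth` directory components."""
    segments: Dict[str, List[str]] = defaultdict(list)
    for path in paths:
        parts = PurePosixPath(path).parts
        # Directory components only; the last part is the file name.
        dirs = parts[:-1][:depth]
        key = "/".join(dirs) if dirs else "."
        segments[key].append(path)
    # Sort keys and files so that runs are reproducible.
    return {key: sorted(files) for key, files in sorted(segments.items())}
```

A directory-based split is crude; segments that follow service or ownership boundaries would likely give an agent more coherent context.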

Anybump

Dependency patching is so time-intensive that most security teams eventually give up and push it to engineering, where it sits in backlogs. We created an automation called Anybump that automates nearly all of it.

Anybump runs reachability analysis to narrow vulnerabilities to those that are actually impactful, then traces through the relevant code paths, runs tests, checks for breakage, and opens a PR once tests pass. After the PR is merged, Cursor's canary deployment pipeline provides a final safety gate before anything reaches production.
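The reachability step can be illustrated with a deliberately simplified filter. This sketch only checks whether first-party code directly uses a symbol that an advisory marks as vulnerable; real reachability analysis follows the call graph, and nothing here reflects how Anybump is actually implemented. The `Advisory` type and the `used_symbols` input are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, List, Set


@dataclass(frozen=True)
class Advisory:
    package: str
    fixed_version: str
    vulnerable_symbols: FrozenSet[str]  # functions the advisory says are affected


def reachable_advisories(
    advisories: List[Advisory],
    used_symbols: Dict[str, Set[str]],
) -> List[Advisory]:
    """Keep advisories whose vulnerable symbols are used by first-party code.

    `used_symbols` maps a package name to the symbols our code calls from it.
    """
    kept = []
    for advisory in advisories:
        called = used_symbols.get(advisory.package, set())
        # A non-empty intersection means at least one vulnerable symbol is used.
        if advisory.vulnerable_symbols & called:
            kept.append(advisory)
    return kept
```

Only the advisories that survive this filter would go on to the slower steps: tracing code paths, running tests, and opening a PR.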

Invariant Sentinel

Invariant Sentinel runs daily to monitor for drift against a set of security and compliance properties. It divides the repo into logical segments and spins up subagents to validate code against a list of invariants.

After analysis, the agent compares current state against previous runs using the automations memory feature. If it detects drift, it revalidates to ensure correctness, then updates its memory and sends a Slack report to the security team with a description of the change and specific code locations as evidence.
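The compare, revalidate, and update cycle might look like the following sketch. It assumes memory is a simple mapping from invariant name to whether the invariant held on the last run. The real format of the automations memory feature is not described here, and `detect_drift` and `revalidate` are hypothetical names.

```python
from typing import Callable, Dict, List, Tuple

State = Dict[str, bool]  # invariant name -> whether it currently holds


def detect_drift(
    previous: State,
    current: State,
    revalidate: Callable[[str], bool],
) -> Tuple[List[str], State]:
    """Return the invariants whose status changed, plus the state to remember."""
    new_memory = dict(previous)
    drifted: List[str] = []
    for name, holds in current.items():
        if previous.get(name) == holds:
            continue  # no change since the last run
        # Re-check before reporting, so a flaky first pass does not raise an alert.
        confirmed = revalidate(name)
        if confirmed != holds:
            continue  # the second check disagreed; keep the old memory
        new_memory[name] = holds
        drifted.append(name)
    return sorted(drifted), new_memory
```

In this sketch an invariant seen for the first time counts as drift, since there is no earlier result to compare it against. The returned list is what a Slack report would be built from.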

Because this automation runs in a full development environment, the agent can write and execute code to validate its own assumptions, complementing traditional functional, unit, and integration tests.

More automations to come

Security is full of opportunities to apply automations, and these four are just the beginning of the work we plan to do. We're already extending them to encompass vulnerability report intake, privacy compliance monitoring, on-call alert triage, and access provisioning.

In each case, agents give us coverage and consistency at a scale we couldn't achieve manually.